How to leverage Kubernetes to modernize your legacy enterprise application infrastructure

The biggest question facing many businesses today is how to improve the efficiency and agility of their application delivery processes. On the journey to infrastructure modernization, should they continue to maintain applications as-is, or should they migrate, upgrade, or replace them? This blog attempts to answer some of these questions.

Take any popular enterprise app, like your Oracle business suite, or even a custom-built application, and it will typically follow a three-tier architecture:

- Application logic built on Java, .NET, Ruby, or a similar platform

- A database server that maintains persistent data

- A monolithic application server with web- or mobile-based clients

The problem with many traditional applications is that they support only vertical scaling (adding CPU, memory, or faster hardware to a single server) rather than horizontal scaling (spreading the load across clusters of multiple nodes, often on commercial off-the-shelf hardware). To add to the woes, almost every tier needs its own specialist team to manage and operate it, and each requires different infrastructure, tools, and processes. Databases are typically deployed on bare-metal infrastructure for better control and maximum performance. Application servers such as JBoss, IBM WebSphere, or WebLogic are usually virtualized on popular hypervisors like VMware. Each of these tiers also has its own tooling for backup, cloning, patching, failover, disaster recovery (DR), and so on.

With divergent considerations and every team working on its own set of policies and processes, coordination can take weeks. It can take months to roll out a new version or add a new capability.

This type of application delivery approach is in dire need of modernization. Two needs, in particular, are driving this transformation.

- The need to go to market faster: Enterprises today want to accelerate time to market, drive innovation, and reduce costs. To move applications from development through testing to production faster, they need cloud-like agility on on-premises infrastructure. Achieving this requires automation at the forefront, covering operations, processes, and end-to-end life cycle management of deployments.

- The need to build new innovative products and services faster: Analytics is becoming a top priority for organizations. As seen in the stock market and retail, there is demand for tooling that delivers real-time, often predictive, insights. This calls for simplified orchestration capabilities to deploy and manage complex distributed apps, like data pipelines or application stacks, and the freedom for developers and operations teams to mix and match the tools and libraries they like and to rapidly snapshot, clone, or restore data.

But how can businesses be faster, better, and still cut costs? The good news is that there is an opportunity to modernize application delivery processes and infrastructure without having to containerize, redesign, or replace every existing application.

The core of innovation is the freedom to experiment in an agile manner. Automation fuels the journey from development to testing and production, and an effective automation chain greatly expands self-service capabilities. At some point, some form of consolidation is also needed to reduce the hardware and software footprint without impacting performance.

The importance of modern, containerized infrastructure

This is where containers come in. Ephemeral and ever-evolving, containers give developers wide freedom of choice in databases, libraries, programming languages, and so on. Applications are typically broken down into microservices and each component is containerized, bringing freedom and agility to even the most complex architectures. Let’s look at an example for comparison: VMware is a dominant player in the on-prem virtualization space. However, if you’re trying to migrate to the public cloud, your cloud vendor may use a different hypervisor, such as Xen or KVM. Moving VM images between hypervisors adds migration time and cost. Because a container image runs on any host with a compatible kernel and container runtime, containerization largely avoids this challenge.

There are other advantages as well:

- Containers are lightweight and high-performing; they have near-zero performance overhead because they share the Linux kernel of the host instead of each running a separate guest OS.

- Process isolation facilitates multitenancy on bare-metal servers with minimum interference between instances.

- Most containers have a smaller footprint than VMs. This allows higher consolidation density and better utilization, and it simplifies operations by eliminating OS and VM sprawl.

- Container management platforms, like Robin.io, include automation and operational efficiencies that support both VM legacy workloads and containerized workloads. This allows you to get the benefits of containerized infrastructure without having to containerize all of your legacy applications – a potentially mammoth undertaking.

How to containerize your enterprise applications

Currently, enterprises are spending a lot of time and effort in developing, testing, and deploying applications. When it comes to your modernization journey, adopting a customized SaaS solution to replace the legacy enterprise application could seem like the simplest solution. But this could end up consuming a lot of your development team’s time in developing and testing configurations, third-party integrations, and so on, unless the SaaS solution is provided by the same vendor. On the other end of the spectrum is the option to completely rewrite applications, which is highly effective but effort-intensive, time-consuming, and expensive.

Enterprises can find a middle ground in a simple “lift-and-shift” strategy that containerizes the application component by component and deploys the result as a full-fledged application that spans multiple containers. In such a scenario, development time is minimal because you retain the same interfaces and libraries. Testing time is also small: it is needed mainly to verify communication between components and to configure the right network and storage. The main additional cost arises when moving from bare metal to, say, the cloud, where developers may need to choose a newer storage stack, consider virtualized networking options, and so on.
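To make the lift-and-shift idea concrete, here is a minimal Python sketch that describes a three-tier application as a list of components and generates one Kubernetes workload and one Service per component. Everything specific in it is an assumption for illustration: the application name `legacy-suite`, the image names under `registry.example.com`, the ports, the `/data` mount path, and the 10Gi volume size are hypothetical. It uses plain Kubernetes objects and does not represent how Robin.io models applications.

```python
import json

# Hypothetical three-tier application. Names, images, and ports are
# placeholders for illustration, not taken from any real deployment.
COMPONENTS = [
    {"name": "web", "image": "registry.example.com/legacy/web:1.0",
     "port": 8080, "replicas": 2, "stateful": False},
    {"name": "app", "image": "registry.example.com/legacy/app-server:1.0",
     "port": 9000, "replicas": 2, "stateful": False},
    {"name": "db", "image": "registry.example.com/legacy/database:1.0",
     "port": 5432, "replicas": 1, "stateful": True},
]


def workload_manifest(component, app_name):
    """Return a Deployment for a stateless tier, a StatefulSet for a stateful one."""
    name = f"{app_name}-{component['name']}"
    labels = {"app": app_name, "tier": component["name"]}
    container = {
        "name": component["name"],
        "image": component["image"],
        "ports": [{"containerPort": component["port"]}],
    }
    spec = {
        "replicas": component["replicas"],
        "selector": {"matchLabels": labels},
        "template": {"metadata": {"labels": labels},
                     "spec": {"containers": [container]}},
    }
    kind = "Deployment"
    if component["stateful"]:
        kind = "StatefulSet"
        # A StatefulSet names its governing Service and claims persistent storage.
        spec["serviceName"] = name
        container["volumeMounts"] = [{"name": "data", "mountPath": "/data"}]
        spec["volumeClaimTemplates"] = [{
            "metadata": {"name": "data"},
            "spec": {"accessModes": ["ReadWriteOnce"],
                     "resources": {"requests": {"storage": "10Gi"}}},
        }]
    return {"apiVersion": "apps/v1", "kind": kind,
            "metadata": {"name": name, "labels": labels}, "spec": spec}


def service_manifest(component, app_name):
    """Return a Service so the other tiers can reach this component by name."""
    name = f"{app_name}-{component['name']}"
    labels = {"app": app_name, "tier": component["name"]}
    spec = {"selector": labels,
            "ports": [{"port": component["port"],
                       "targetPort": component["port"]}]}
    if component["stateful"]:
        spec["clusterIP"] = "None"  # headless Service for the StatefulSet
    return {"apiVersion": "v1", "kind": "Service",
            "metadata": {"name": name, "labels": labels}, "spec": spec}


def build_manifests(components, app_name="legacy-suite"):
    """Bundle all workloads and Services into a single List object."""
    items = []
    for component in components:
        items.append(workload_manifest(component, app_name))
        items.append(service_manifest(component, app_name))
    return {"apiVersion": "v1", "kind": "List", "items": items}


if __name__ == "__main__":
    print(json.dumps(build_manifests(COMPONENTS), indent=2))
```

The printed JSON could be saved to a file and passed to `kubectl apply -f`, which accepts JSON as well as YAML. The interfaces between tiers stay the same; only the packaging and the network and storage wiring change, which is where the testing effort described above is concentrated.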

Containers help you package your software and its dependencies in independent units. They are easy to deploy, and the operations team does not need to worry about installing libraries and satisfying dependencies. Each container runs as an isolated set of processes with its own filesystem, so the dependencies of one critical application do not conflict with those of another. For a long time, containerization ignored stateful, enterprise, and big data applications. Now, with platforms like Robin.io, this too has become possible.

Unlock the power of containerization with Robin.io

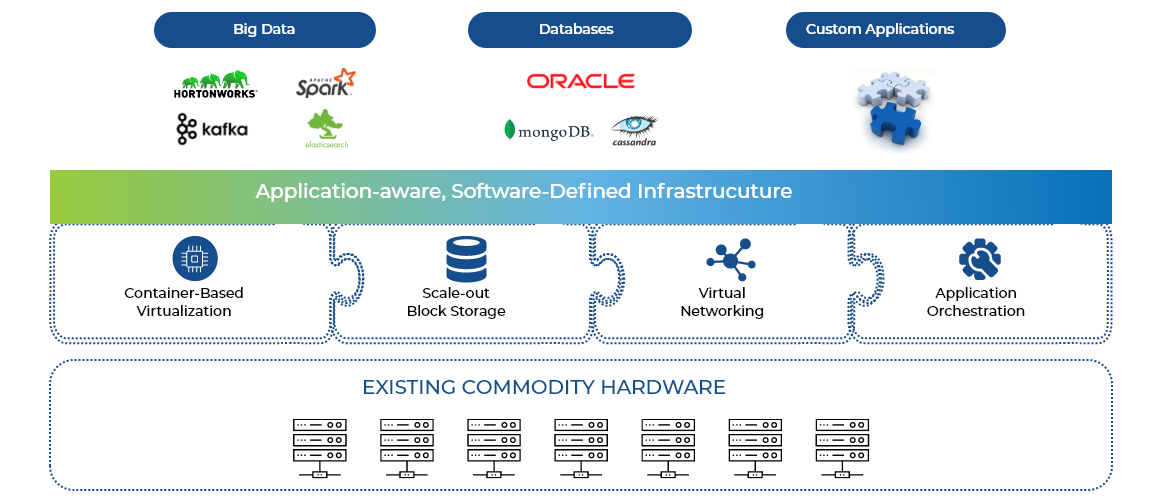

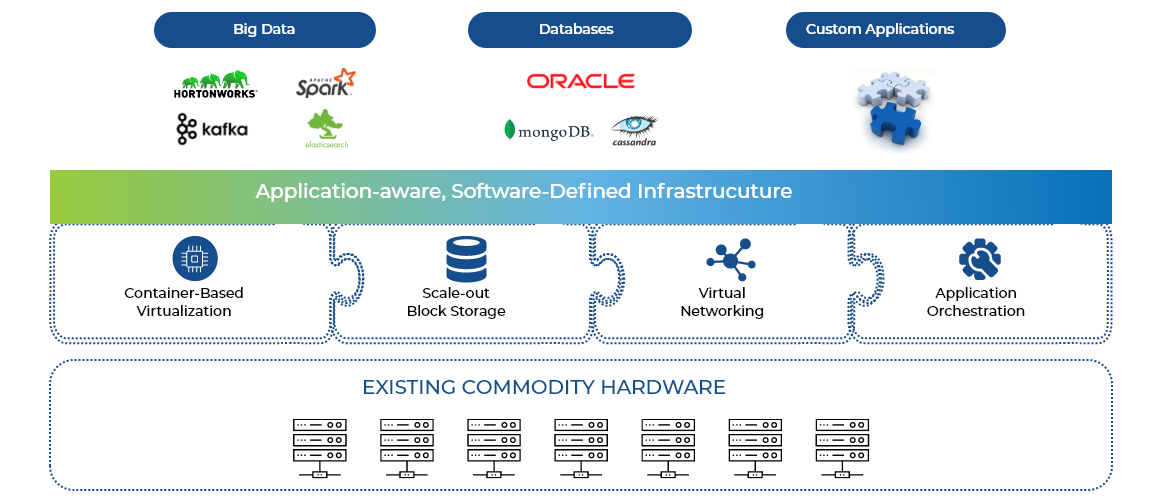

A typical enterprise application needs multi-host networking, performance-intensive storage, and orchestration for complex components. Robin.io provides an application-aware, software-defined infrastructure. It is a single software binary that includes:

- Container-based virtualization

- Software-defined, scale-out block storage

- Virtual networking

- Application and infrastructure orchestration

- Deployments of containerized or VM-based workloads on cloud-native infrastructure

Robin.io aims to simplify application management, guarantee predictable performance, and improve utilization. You can run custom applications, big data applications, and traditional databases on it.

Robin.io essentially provides an additional layer of abstraction, enabling an application to migrate without the complexities of rearchitecting and rewriting. It manages state on behalf of migrated applications, handling their interactions with the abstracted storage and taking care of issues like rollbacks and DR.

Robin.io is architected to address the needs of customers looking to implement a hybrid strategy or migrate from on-premises data centers to the cloud. It can help you run mission-critical applications with confidence, deliver new products and features faster, collaborate better across teams, and avoid vendor and infrastructure lock-in, not to mention the performance benefits.

In addition, Robin.io can:

- Protect applications through snapshot and rollback capabilities for all of the data and configurations

- Create test instances with a single click

- Clone complete apps with the option to allocate infrastructure resources on the go through the Robin.io interface

App as a service

What applications demand from the available infrastructure is often complex, as is rightsizing and scaling the deployed applications. This is often why customers see very low utilization of their infrastructure. One key benefit of a cloud-native application is the ability to scale horizontally and vertically, either on demand or automatically based on performance KPIs.
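As an illustration of scaling automatically from a performance KPI, the sketch below implements the replica calculation documented for the Kubernetes Horizontal Pod Autoscaler: desired replicas = ceil(current replicas × current metric ÷ target metric), skipped when the ratio is within a tolerance of 1. The function name, the replica bounds, and the example numbers are assumptions made for this sketch; it is not a description of Robin.io's own scaling logic.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Compute a replica count from a KPI, following the HPA scaling rule.

    current_metric and target_metric use the same unit, for example
    average CPU utilization in percent or requests per second per replica.
    """
    ratio = current_metric / target_metric
    # Ignore small deviations so the replica count does not flap.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Keep the result inside the configured bounds.
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # Four replicas at 90% average CPU against a 60% target: scale out.
    print(desired_replicas(4, 90, 60))
    # Four replicas at 30% average CPU against a 60% target: scale in.
    print(desired_replicas(4, 30, 60))
```

Scaling out when the KPI exceeds its target and scaling in when it falls below is also what lifts utilization: capacity follows demand instead of being provisioned for the peak.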

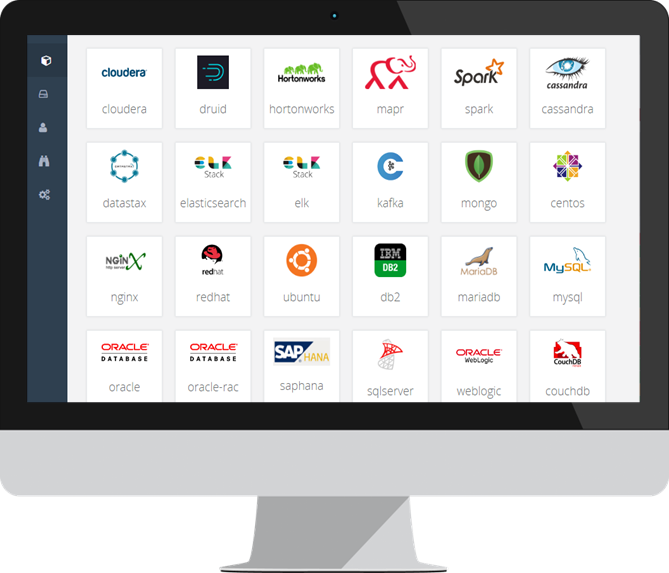

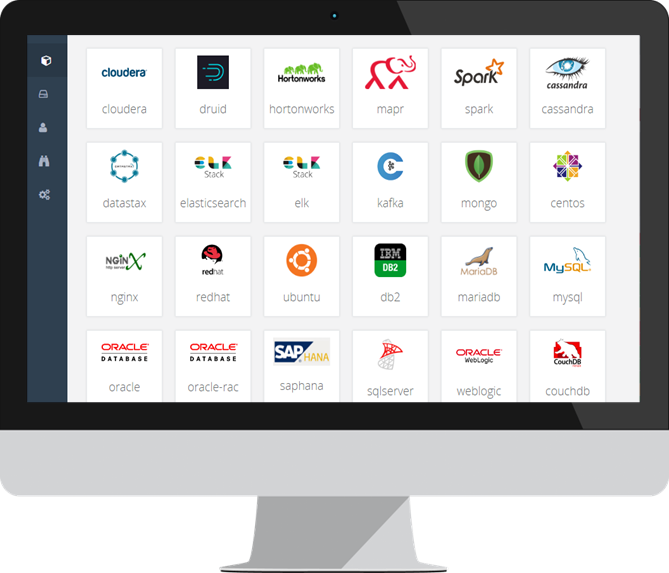

Robin.io provides a rich set of automation capabilities that let you specify application requirements, change them, and deploy based on those specifications. This gives customers an app-store-like experience and accelerates on-demand application deployment.

If you’re looking to know more about how Robin.io can accelerate your containerization or application modernization roadmap, connect with us.